Our impact

- We raised awareness about algorithmic amplification

- We are influencing the current regulation changes

- We prompted YouTube to change their algorithm

Our impact on public awareness

- The New York Times
- The Telegraph
- The Washington Post
- MIT Technology Review

Our impact on regulation

Our publications have been cited by regulators to advocate for more transparency on algorithmic amplification, both regarding the behavior and the design of the algorithm.

The EU Commission consulted us while drafting the Digital Services Act and Digital Markets Act, which promise to deeply reform the legal framework for online platforms, both in Europe and globally.

In the US, our analysis of YouTube's promotion of conspiratorial content was a central argument in congressional testimony entitled “A Country in Crisis: How Disinformation Online is Dividing the Nation”.

In January 2021, the US Congress cited our work in a formal letter to the CEOs of Google and YouTube.

Our impact on YouTube


AlgoTransparency has been providing more transparency on which YouTube videos are the most recommended.

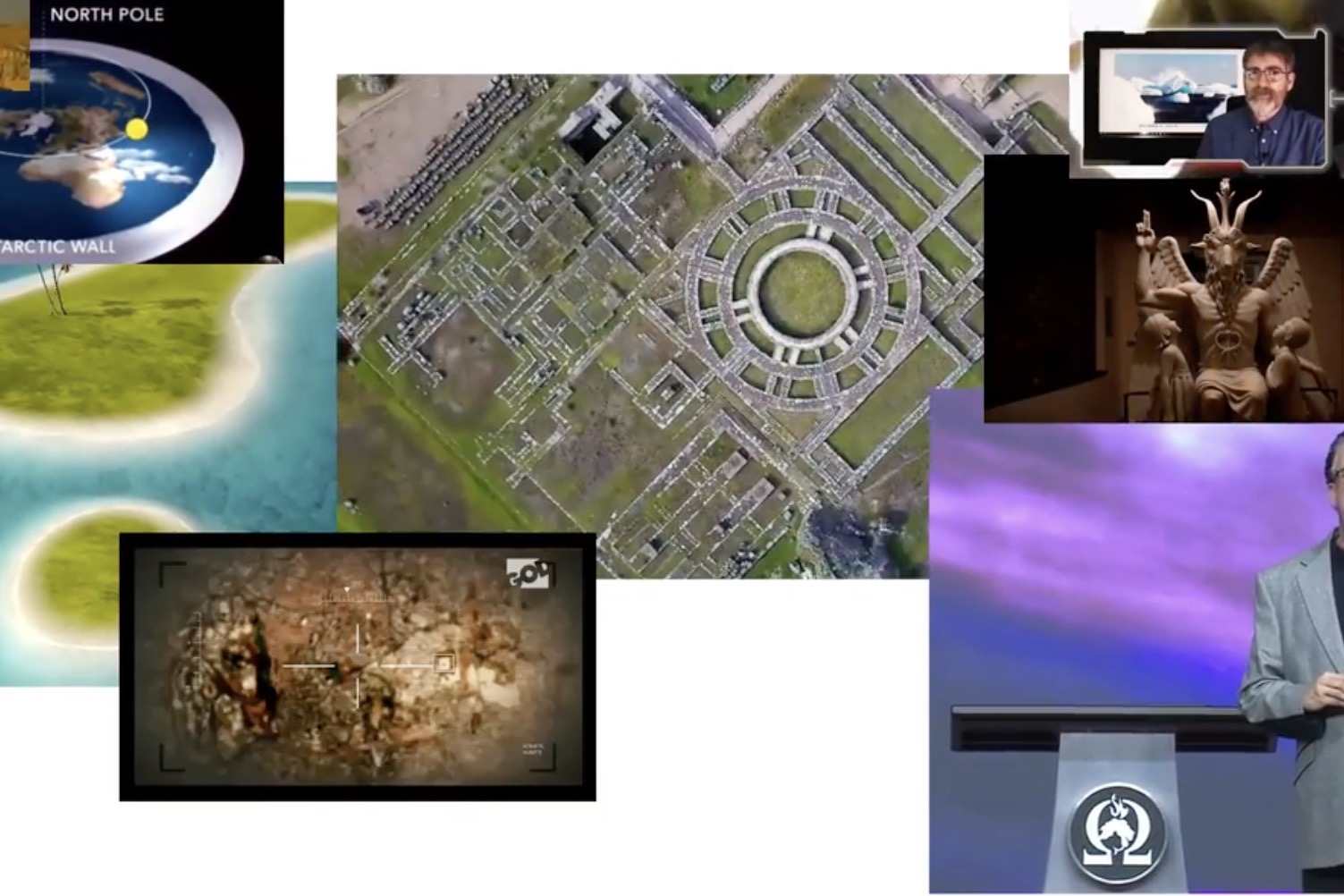

The past two years have seen a number of important milestones achieved toward a safer YouTube. These are the result of a large concerted effort by journalists, academics, and NGOs. When our work has been featured in the media, we have seen it elicit a positive reaction from YouTube. First, it put Wikipedia snippets under videos on debated topics. Then, it took more than 30 measures to decrease the propagation of harmful disinformation, which YouTube reported led to a 70% drop in watch time of this content from recommendations.

The timeline below illustrates the most notable public steps on our journey.


2018

AlgoTransparency

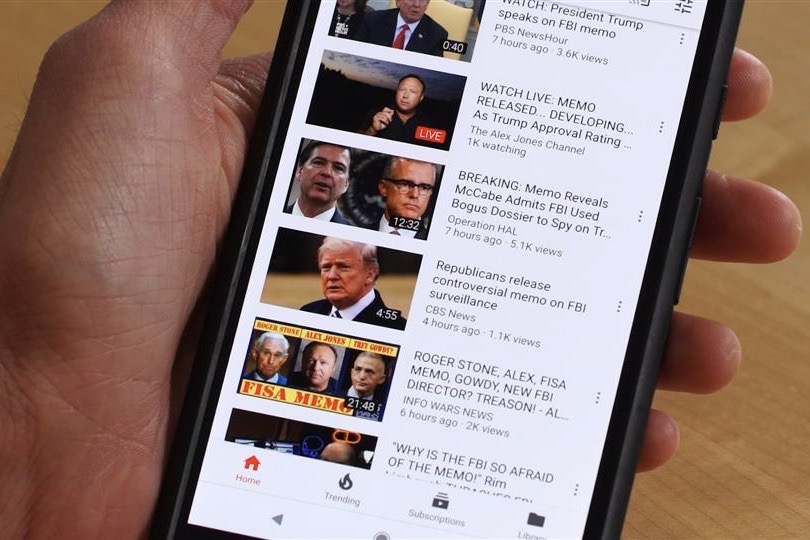

Feb. 02 2018: 'Fiction is outperforming reality': how YouTube's algorithm distorts truth (The Guardian)

The first article in The Guardian featuring our work about the 2016 US election and the promotion of harmful lies on YouTube. The Guardian communicated extensively with Google.

YouTube

On the same day, Google announces in the WSJ that it will implement new informational labels to provide context in an effort to fight conspiracy and propaganda.

AlgoTransparency

A WSJ investigation, to which Guillaume contributed, illustrates that YouTube is pushing people towards unexpected content.


2019

AlgoTransparency

Guillaume was named in the WIRED25 list of "people racing to save us" for his work denouncing harmful conspiracy theories promoted by YouTube.

YouTube

YouTube announces for the first time that they will actively be "reducing recommendations of borderline content and content that could misinform users in harmful ways—such as videos promoting a phony miracle cure for a serious illness, claiming the earth is flat, or making blatantly false claims about historic events like 9/11."

- YouTube's official blog announced that their measures to reduce the amplification of harmful content led to a 70% average drop in watch time of this content coming from non-subscribed recommendations in the U.S.


2020

AlgoTransparency

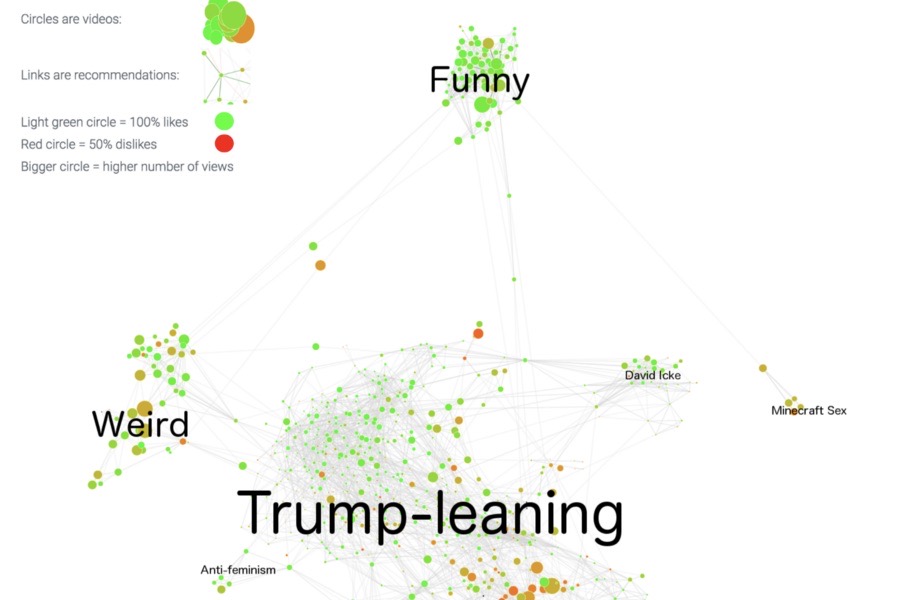

Our analysis of the decrease in conspiracy theories is published in the New York Times. One of the most prominent conspiracists that we expose as being massively promoted by YouTube is David Icke.

YouTube

David Icke, the most popular and prominent YouTuber depicted in our conspiracy analysis, is banned from YouTube.

- YouTube announces its continued effort to stop promoting conspiracies, and reports that these efforts have decreased the promotion of prominent QAnon-related channels by 80%.


AlgoTransparency

We monitor how YouTube's recommender system has evolved since the 2016 elections, and publish an analysis with the New York Times. We notice significant changes in the most recommended channels, in favor of authoritative news channels over independent YouTubers. The propensity to promote polarizing content rather than reconciling views remains.

- Guillaume tweets that YouTube is promoting the voter fraud narrative by the millions, in particular from channels NTD and Newsmax TV.

YouTube

YouTube bans new videos about voter fraud.

- Medium
- Netflix
- BBC
- Wired
- Bloomberg
- NBC News
- New York Magazine
- Vice
- The Wall Street Journal
- Twitter